TL; DR

Quantum computing will break encryption and expose blind spots in how organizations track and govern cryptographic assets. Before migrating to post-quantum algorithms, you must find, inventory, and understand the cryptography already embedded in your software. SCANOSS’s Crypto Finder, powered by the SCANOSS Encryption Dataset reveals hidden algorithms, enabling proactive governance and compliance with emerging regulations like NIS2 and the Cyber Resilience Act.

Post-quantum cryptography starts with knowing what you have

The conversation about quantum computing tends to collapse into a single scenario: Shor’s algorithm breaks RSA, everything encrypted today becomes readable tomorrow. That threat is real. But it frames quantum risk as a future confidentiality problem, when the more immediate challenge is governance.

Why does quantum computing threaten more than encrypted data?

Modern software supply chains don’t just use encryption to protect data at rest. They depend on cryptographic signatures to verify that code is authentic, key exchange mechanisms to secure communications between services, and integrity checks to confirm that software hasn’t been tampered with in transit or at rest.

When quantum computing weakens the algorithms underpinning these functions, the damage extends well beyond confidentiality. If a digital signature can’t be trusted, neither can the software it validates. That affects code signing, package verification, update mechanisms, and any system where trust is established cryptographically.

The threat isn’t theoretical in terms of preparation timelines. Adversaries are already running harvest-now-decrypt-later attacks — storing encrypted data today to decrypt once quantum capability matures. The EU’s NIS2 Directive requires organisations to demonstrate cryptographic governance by October 2026. The question now is whether organisations will have done the groundwork in time for migration.

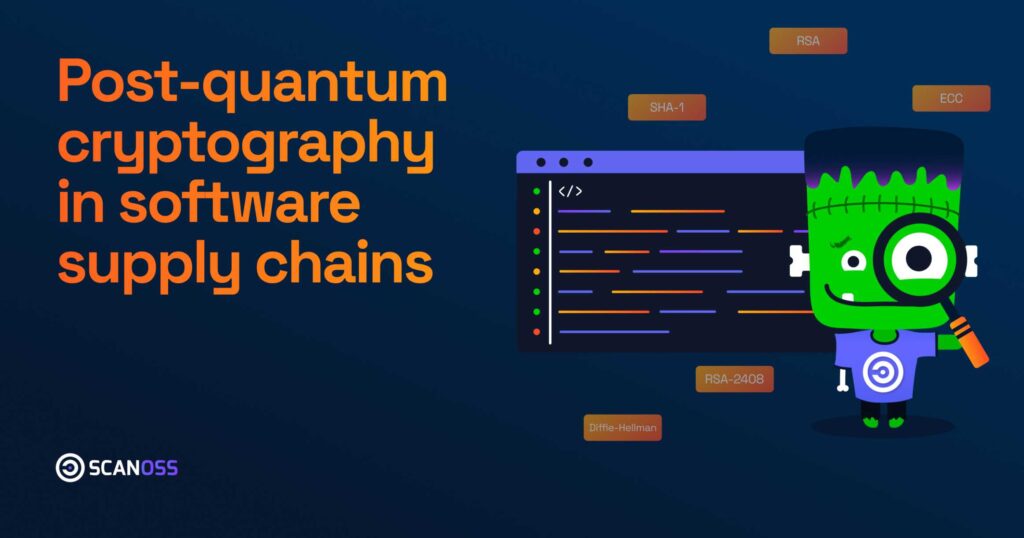

What makes cryptographic assets so difficult to find?

The challenge is that cryptography is everywhere, and much of it is invisible to standard tooling.

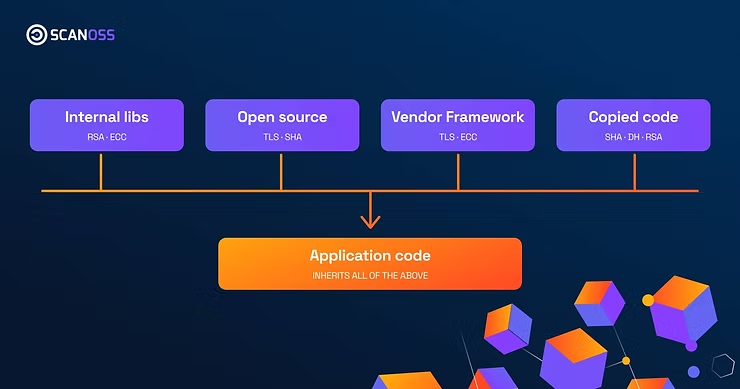

Encryption appears in IoT device authentication, cloud service integrity checks, password hashing in application logic, and digital signature verification in software update pipelines. Algorithms accumulate across codebases over years — some copied from Stack Overflow, some inherited through open-source dependencies, some embedded in internal libraries that predate current security standards. SHA-1 implementations and RSA-1024 key operations from decade-old decisions remain in production systems with no record of where they came from or what depends on them.

The standard approach to finding this cryptography relies on dependency manifests and vendor documentation. This works for declared dependencies. It doesn’t work for cryptographic functions that have been copied directly into application code, modified from their original form, or bundled inside internal libraries that don’t appear in any manifest.

SCANOSS data shows this gap is substantial. When scanning projects at source level, Crypto Finder regularly surfaces cryptographic implementations that don’t appear in any dependency declaration, they are embedded in application logic, inherited through code reuse, or present in components whose provenance has been lost entirely. These are the implementations that create the most exposure, precisely because they’re the hardest to find and track.

How does source-level detection change the picture?

Dependency scanning tells you what packages your software declares. Source-level scanning tells you what cryptographic functions your software actually executes.

Crypto Finder acts as a local orchestrator, detecting the languages in your codebase, applying the relevant rulesets to mine that code, and querying the SCANOSS Encryption Dataset for cryptographic intelligence on your open source components. The result is a single, unified CBOM that captures your full cryptographic surface, including implementations that never appear in any manifest.

This matters because the gap between what’s declared and what’s present is where quantum exposure hides. An organisation that relies solely on dependency scanning will produce an inventory that looks complete but misses the implementations most likely to cause problems during migration.

Source-level visibility is also what enables cryptographic agility — the capacity to swap algorithms without restructuring entire systems. You cannot build agility into systems whose cryptographic contents you don’t know.

What is a CBOM and why does it depend on source-level data?

A Cryptography Bill of Materials (CBOM) is a structured record of the cryptographic primitives present in a system — the hashing algorithms, key exchange mechanisms, digital signatures, and encryption routines that software relies on to establish and maintain trust.

CBOMs extend the logic of an SBOM: rather than documenting software components, they document the cryptographic foundations those components depend on. For post-quantum planning, a CBOM is the baseline document that makes migration possible. It shows which systems rely on RSA, ECC, or Diffie-Hellman, and it lets security teams assess which of those systems will require remediation as post-quantum standards mature.

A CBOM built only from dependency data will have the same blind spots as the scanning approach it came from. One built from source-level detection captures the full cryptographic surface — including the implementations buried in copied code, modified libraries, and internal components that don’t show up anywhere else.

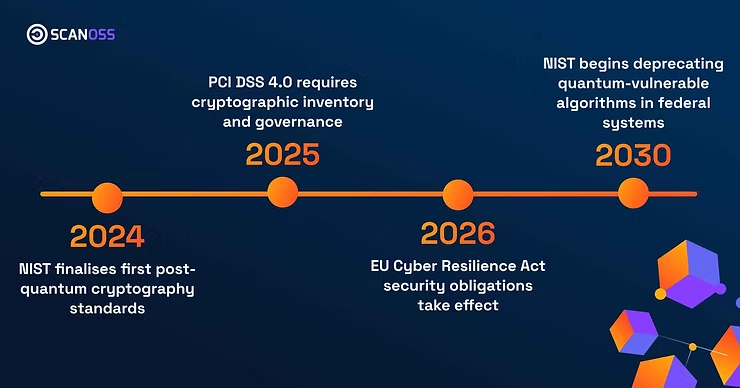

What does quantum-ready governance actually require organisations to do?

Regulatory frameworks are converging on a common expectation: organisations must be able to demonstrate that they know what cryptographic algorithms they’re running and have a plan for managing them. The EU Cyber Resilience Act, NIS2, and NIST’s post-quantum roadmap all point in this direction, with compliance timelines that are now inside most organisations’ planning horizons.

Meeting that expectation requires three things in sequence. First, a complete inventory of cryptographic implementations across the software estate — including the ones that don’t appear in manifests. Second, an assessment of which implementations rely on algorithms vulnerable to quantum attack. Third, a migration roadmap that sequences remediation based on exposure and criticality.

None of those steps are possible without the first one. Organisations that begin with incomplete inventories will produce compliance documentation that reflects what they declared, not what they’re actually running. That gap is a regulatory risk as much as a security one.

The organisations best positioned for post-quantum migration aren’t necessarily those with the most advanced quantum teams. They’re the ones that built accurate cryptographic inventories early — before the migration pressure arrived.

To explore how Crypto Finder can help you build a complete cryptographic inventory, speak with our team.