TL;DR

NIS2 expands cybersecurity accountability to include software supply chains and encryption practices. While it does not mandate specific artefacts such as SBOMs or CBOMs, organisations must demonstrate control over the components and cryptography within their systems. Code-level visibility is becoming essential to meet these expectations, reduce operational risk, and support regulatory alignment. ENISA has published Technical Implementation Guidance to support organisations in translating these obligations into operational practice — a reference point for any team working through what NIS2 compliance requires in concrete terms.

Why is NIS2 changing how organisations approach software risk?

The NIS2 Directive, whose core obligations for cybersecurity risk management and incident reporting are set out in Articles 21 and 23 respectively, is often presented as a continuation of existing cybersecurity frameworks, but its operational impact is broader. It extends obligations beyond critical infrastructure operators to a wider set of sectors and introduces stronger enforcement mechanisms, including fines and executive accountability.

More importantly, it shifts the focus towards systemic risk. Organisations are expected to manage not only their own infrastructure but also the risks introduced through suppliers, service providers, and software dependencies. This aligns closely with the direction of the Cyber Resilience Act, under which products with digital elements, encompassing hardware, software, subsystems, and systems, are treated as a core element of security rather than a supporting component.

In practical terms, this means that organisations must understand what software they are running, where it comes from, and how it behaves. High-level inventories are no longer sufficient. Without visibility into the actual codebase, risk assessments remain incomplete.

What does NIS2 say about encryption and why does it matter?

NIS2 does not mandate specific cryptographic standards, but it elevates encryption to a strategic priority. Under Article 7, Member States are required to define national cybersecurity strategies that include policies on encryption. These policies explicitly reference two areas.

The first is public procurement. Governments are expected to include cybersecurity requirements for ICT products and services, with encryption highlighted as a key element. This creates indirect pressure on software and hardware vendors to demonstrate robust cryptographic practices.

The second is risk management. NIS2 requires entities to adopt cybersecurity measures that take into account the state of the art, and Article 21(2)(h) explicitly lists policies and procedures regarding the use of cryptography and, where appropriate, encryption. This is not limited to data protection: it includes how cryptographic algorithms are selected, implemented, and maintained across systems.

The implication is clear. Organisations must be able to explain which algorithms they rely on, where they are used, and whether they remain fit for purpose. This becomes particularly relevant as post-quantum considerations and regulatory scrutiny increase.

Why are SBOMs not enough for NIS2 compliance?

Software Bills of Materials have become a common response to supply chain requirements. They provide a structured list of components, helping organisations identify dependencies and track vulnerabilities.

However, SBOMs have limitations. Many are generated from declared dependencies, such as those listed in package manifests, rather than from analysis of the actual code. They often miss copied code, modified libraries, or embedded components that are not formally tracked. In addition, they typically do not record which cryptographic functions the listed components implement.

For NIS2, this creates a gap. Organisations may have an SBOM and still lack visibility into critical risks like weak cryptography. A vulnerable library may be identified, but the specific algorithm usage within that library remains unknown. In a regulatory context, this weakens the ability to demonstrate control.

CBOMs are not required by NIS2, but they address a blind spot that the directive indirectly exposes. By focusing on cryptographic elements rather than components alone, CBOMs provide insight into the algorithms embedded within software.

Where cryptographic risk actually lives

Cryptographic risk is often inherited rather than intentionally designed. Algorithms enter systems through dependencies, vendor software, or reused code. Over time, organisations lose track of where and how encryption is implemented.

A Cryptography Bill of Materials (CBOM) surfaces this information. It identifies algorithms across the codebase — including those buried in dependencies or embedded as copied code — enabling teams to assess whether they align with current standards or regulatory expectations. We have written in depth about CBOMs separately; the point here is that when combined with SBOM data, they provide a more complete view of software risk than either can offer alone.
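As a rough illustration of what a CBOM-style inventory records, and how it attaches to SBOM components, the Python sketch below scans the source files attributed to one component for algorithm names and tags each hit with a status. Everything here is an assumption for illustration: the component name and files are hypothetical, the `ALGORITHM_STATUS` table is a simplified example policy rather than authoritative guidance, and plain regular-expression matching yields both false positives (a variable called `des`) and false negatives (algorithms chosen at runtime, or compiled binaries). It is not a CycloneDX or any other standard CBOM serialisation.

```python
import re
from dataclasses import dataclass

# HYPOTHETICAL example policy; real assessments should follow current guidance
# from bodies such as NIST or national cybersecurity agencies.
ALGORITHM_STATUS = {
    "MD5": "weak",
    "SHA1": "weak",
    "DES": "weak",
    "RC4": "weak",
    "RSA": "quantum-vulnerable",
    "ECDSA": "quantum-vulnerable",
    "AES": "acceptable",
    "SHA256": "acceptable",
}

# Naive whole-token match on algorithm names, tolerating spellings like "SHA-1" or "sha_256".
PATTERN = re.compile(r"\b(MD5|SHA[-_]?1|SHA[-_]?256|DES|RC4|RSA|ECDSA|AES)\b", re.IGNORECASE)


@dataclass(frozen=True)
class CryptoFinding:
    component: str  # SBOM component the file belongs to
    path: str
    line: int
    algorithm: str  # normalised name, e.g. "SHA1"
    status: str     # value from ALGORITHM_STATUS, or "unknown"


def scan_component(component: str, files: dict[str, str]) -> list[CryptoFinding]:
    """Scan the source text of one component's files for algorithm names."""
    findings = []
    for path, text in files.items():
        for lineno, line in enumerate(text.splitlines(), start=1):
            for match in PATTERN.finditer(line):
                algorithm = match.group(1).upper().replace("-", "").replace("_", "")
                status = ALGORITHM_STATUS.get(algorithm, "unknown")
                findings.append(CryptoFinding(component, path, lineno, algorithm, status))
    return findings


# Hypothetical vendored component, as it might appear in an SBOM.
files = {
    "vendor/legacy_auth.py": "import hashlib\n\ndigest = hashlib.md5(data).hexdigest()\n",
    "vendor/tls_notes.py": "# handshake uses RSA key exchange, then AES-256-GCM\n",
}
findings = scan_component("legacy-auth 1.2.0", files)
for f in findings:
    print(f.component, f.path, f.line, f.algorithm, f.status)
```

The SBOM alone would say only that `legacy-auth 1.2.0` is present; the findings add that it uses MD5 (flagged as weak under this example policy) and RSA (flagged as quantum-vulnerable), which is the combined view the paragraph above describes.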

How does this align with broader regulatory trends?

NIS2 does not exist in isolation. It is part of a broader regulatory movement in Europe that includes the Cyber Resilience Act, the Digital Operational Resilience Act (DORA) for the financial sector, and the AI Act, all of which are regulations rather than directives, as well as other sector-specific frameworks covering areas such as critical infrastructure.

Across these regulations, a common pattern is emerging. Organisations are expected to demonstrate continuous control over their software environments. Static artefacts and periodic audits are being replaced by expectations of always-on visibility and responsiveness.

The Cyber Resilience Act (CRA), for example, places direct obligations on manufacturers of products with digital elements, including software, covering vulnerability handling and lifecycle management. NIS2 complements this by focusing on operators and service providers, ensuring that risk is managed across the entire ecosystem.

Encryption plays a consistent role across these regulatory frameworks. It is treated not just as a security feature, but as a governance concern. Organisations must be able to justify their choices, monitor their usage, and adapt to changing standards.

What does NIS2 compliance look like in practice?

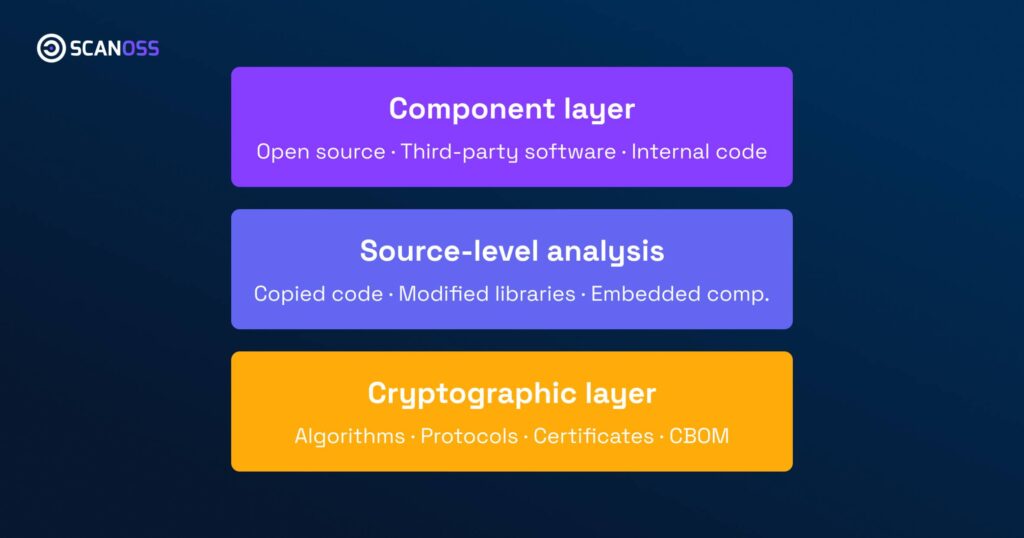

In practical terms, organisations preparing for NIS2 should focus on three areas. ENISA’s Technical Implementation Guidance offers a detailed operational framework for each of these and is worth consulting alongside any internal compliance assessment.

Visibility.

Organisations need to understand all software components within their systems, including open source and third-party code, and to have insight into how cryptography is implemented within them.

Traceability.

Organisations must be able to link software components to their origins, suppliers, and usage contexts. This supports both risk assessment and incident response.

Continuous monitoring.

NIS2 reflects a fundamental change in regulatory mindset, and this is one of the defining shifts from the original NIS Directive to its successor. Rather than treating compliance as a periodic exercise, the directive frames risk management as proactive and systemic, requiring always-on inventories, continuous reassessment, and timely response to new vulnerabilities or regulatory developments.

These capabilities cannot be achieved through manual processes alone. They require automated analysis and integration into development and operational workflows.
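As a minimal sketch of what this automation can look like, the Python below keeps a traceable inventory, with each component linked to a supplier, an origin, and the systems that use it, and re-checks it whenever the inventory or a list of advisories changes. The record fields, the component and system names, and the `advisories` mapping are hypothetical, and exact-version matching is a simplification; a production setup would consume real SBOM/CBOM data and vulnerability feeds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    version: str
    supplier: str                  # who delivered it: vendor, open-source project, internal team
    origin: str                    # where it came from, e.g. a repository or registry
    used_by: tuple[str, ...] = ()  # systems or services that depend on it


def diff_snapshots(previous: list[Component], current: list[Component]) -> dict[str, list]:
    """Compare two inventories by component name: additions, removals, version changes."""
    prev = {c.name: c for c in previous}
    curr = {c.name: c for c in current}
    return {
        "added": sorted(curr.keys() - prev.keys()),
        "removed": sorted(prev.keys() - curr.keys()),
        "changed": sorted(
            (name, prev[name].version, curr[name].version)
            for name in prev.keys() & curr.keys()
            if prev[name].version != curr[name].version
        ),
    }


def affected_systems(inventory: list[Component],
                     advisories: dict[tuple[str, str], str]) -> dict[str, list[str]]:
    """For each advisory matching an inventory entry, list the systems that must respond."""
    impact: dict[str, set[str]] = {}
    for component in inventory:
        advisory = advisories.get((component.name, component.version))
        if advisory is not None:
            impact.setdefault(advisory, set()).update(component.used_by)
    return {adv: sorted(systems) for adv, systems in impact.items()}


# Hypothetical inventory snapshots taken on consecutive days.
yesterday = [
    Component("libexample", "1.0.0", "Example Project", "https://example.org/libexample", ("billing",)),
    Component("parser-x", "2.3.1", "Vendor A", "vendor delivery", ("billing", "portal")),
]
today = [
    Component("libexample", "1.1.0", "Example Project", "https://example.org/libexample", ("billing",)),
    Component("parser-x", "2.3.1", "Vendor A", "vendor delivery", ("billing", "portal")),
    Component("auth-kit", "0.9.0", "Internal team", "internal repository", ("portal",)),
]
advisories = {("parser-x", "2.3.1"): "ADVISORY-0001"}  # hypothetical advisory feed

print(diff_snapshots(yesterday, today))
print(affected_systems(today, advisories))
```

Running both checks on every build or inventory refresh, rather than during an annual audit, is one way to approximate the continuous reassessment described above; the `used_by` links are what turn a new advisory into a concrete list of systems for incident response.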

Why is NIS2 an opportunity, not just a compliance burden?

It is easy to view NIS2 as another regulatory requirement. However, it also provides a framework for improving software governance.

Organisations that invest in deeper visibility gain practical benefits. They can respond faster to vulnerabilities and other risks, reduce uncertainty in procurement decisions, and build more resilient systems. They are also better positioned to adapt to future regulations, particularly those related to cryptography and post-quantum readiness. It is worth noting that, as a directive rather than a regulation, NIS2 is transposed into national law by each Member State individually, meaning the specific implementation requirements will vary by jurisdiction.

From a business perspective, this translates into reduced risk and increased trust. Customers, partners, and regulators are placing greater emphasis on transparency. Being able to demonstrate control over software and encryption becomes a competitive advantage.

Moving from compliance to control

NIS2 does not prescribe specific tools or artefacts, but it makes one expectation clear: organisations must understand and continuously manage the cybersecurity risks embedded in their software assets and, by extension, in the applications that depend on them.

Achieving this requires moving beyond surface-level inventories towards always-on code-level insight.

The priority is not to produce more documentation, but to improve the quality of the information you rely on. That means building a continuous, verifiable view of software components, algorithms, and provenance.

If you are assessing how to align with NIS2 while preparing for broader regulatory shifts such as the Cyber Resilience Act, it is worth exploring how code-level intelligence can support both compliance and operational resilience. Contact us to find out how we can help.